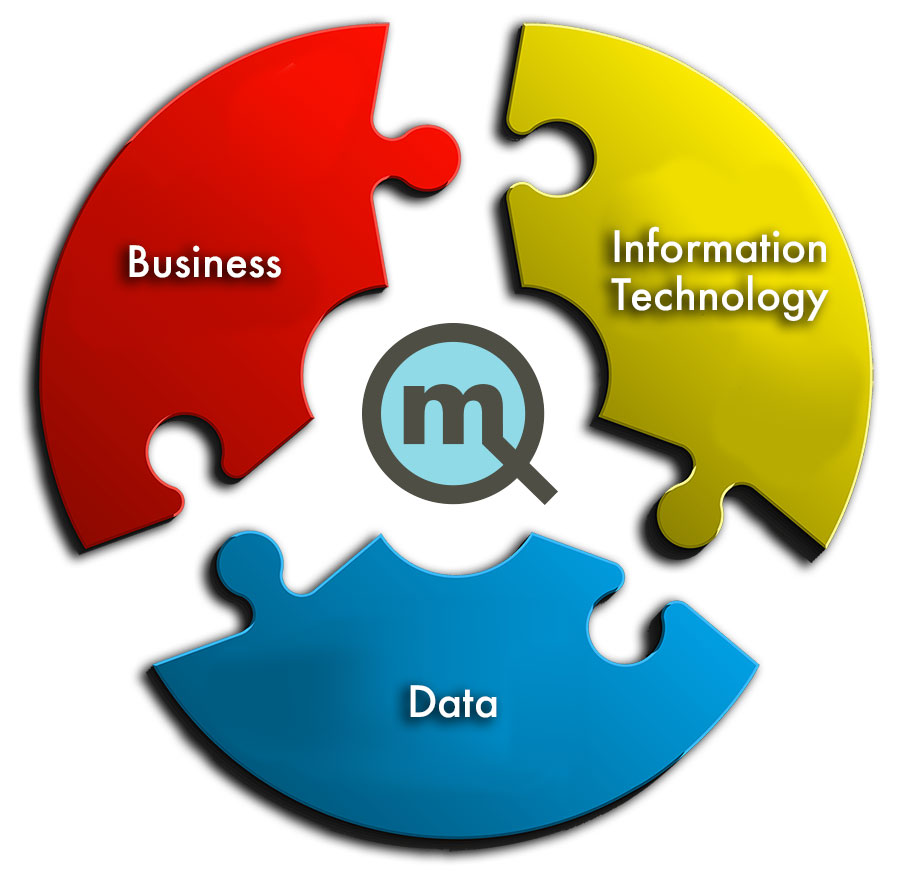

Quantified Mechanix operates at the place where business, technology and data meet. We are equally skilled at working with business leaders and with IT, and we use technology to create real opportunities for the business. We turn data into information and help the business use it to develop the insights that spur the actions that create real results.

Pain points. The biggest barriers companies face in extracting value from data and analytics are organizational. Many struggle to incorporate data-driven insights into day-to-day business processes because they do not have the right talent. The right talent is not only data experts, but people in the business who combine adequate data skills with industry and functional expertise.

Analytics is multidisciplinary. Delivering effective analytics results requires many specialized skills, including networking, security, various software applications, databases, data architecture, and software development with niche tools such as ETL. In most organizations, these skills are spread across several people on different teams, if they exist at all, and frequently analytics is not their primary function or experience. For example, a database administrator may be very skilled, but if their background is managing databases for transactional applications, even the basic approaches to performance tuning for analytics may seem foreign.

Analytics is a unique use case that IT struggles to deliver:

- Requires skill sets that are rare and in demand.

- Requires functional understanding of source applications, processes and data.

- Users expect an unusual degree of performance, capability and flexibility.

A DBA may spend 25% of their time on routine disaster recovery tasks, 25% on administration and maintenance, 25% on monitoring transactional systems, and the remaining 25% on everything else, which includes analytics. In this common scenario, it is difficult to become excellent at supporting analytics. For the DBA, supporting analytics means understanding the data integration and ETL processes that require throughput, and understanding analytics across the spectrum of users and usage patterns (ad hoc, production reports, exploration). The performance needs of these workloads sometimes conflict, but to be successful, all of them must meet user expectations of performance, which are increasingly demanding.

The IT/Business partnership is often not effective:

- The business struggles to develop insights that can be turned into action because IT is unresponsive.

- IT struggles to support the business because the business does not know what it wants.

Similarly, requirements from the business for analytics are different. In some ways they are less defined and less discrete, so to IT it looks like the business does not know what it wants. The projects themselves are not discrete either; they are best orchestrated as DevOps-style teams working continuously to explore, discover and deliver constantly improving capabilities to the business. The business needs a platform where it can experiment, explore and deploy, which requires layers of security, capability and flexibility managed and delivered in an integrated way that the IT organization is not accustomed to providing.

IT struggles to support meaningful exploration because its competencies are siloed.

- Data, analytics software and infrastructure are separate disciplines.

- Staff in each discipline have limited experience with the others.

- IT does not have adequate understanding of the business.

Traditional IT is organized around specific disciplines such as server and network administration, database administration, applications, and security. Analytics cuts across these disciplines, so organizing to deliver it requires large teams with varying levels of expertise, experience and engagement with the goal. This results in weak, disconnected links, slow grinding progress, frustration and failure. When data volumes become large, or some of the applications are in the cloud, the challenges multiply.

Often the business tries to solve the problem with manual processes and spreadsheets.

- Time consuming to create and manage.

- Not timely and error prone.

- Not secure.

The most common solution I see organizations attempt is spreadsheets. Often small teams of users, sometimes just one person in finance, begin extracting the data they need from reports within various applications. The process is time consuming. Over time, the spreadsheet evolves to include summaries, complex functions and other methods to integrate data from multiple systems or perform calculations. Sometimes the complex web breaks, and it takes considerable effort and time to fix it. Other times minor errors are introduced that go undetected. Because it takes so long to assemble the data, the business is unable to use this information in a timely way to improve results. As the errors accumulate, trust in the numbers declines, and the business lacks the confidence to make clear, informed decisions.

Without confidence the business stagnates.

- Change is too risky.

- Lack the agility to explore and confidence to experiment.

This is not the way to innovate. In this environment, the safest decision is “no decision”. Without timely, trusted data, changing course is a risky proposition. And a time consuming, error prone process makes data exploration exceptionally difficult. By the time an analyst has an answer, they quite literally have forgotten the question. The business needs an analytics platform that not only answers the first question quickly, but is able to answer the next question as if it were designed to do so.

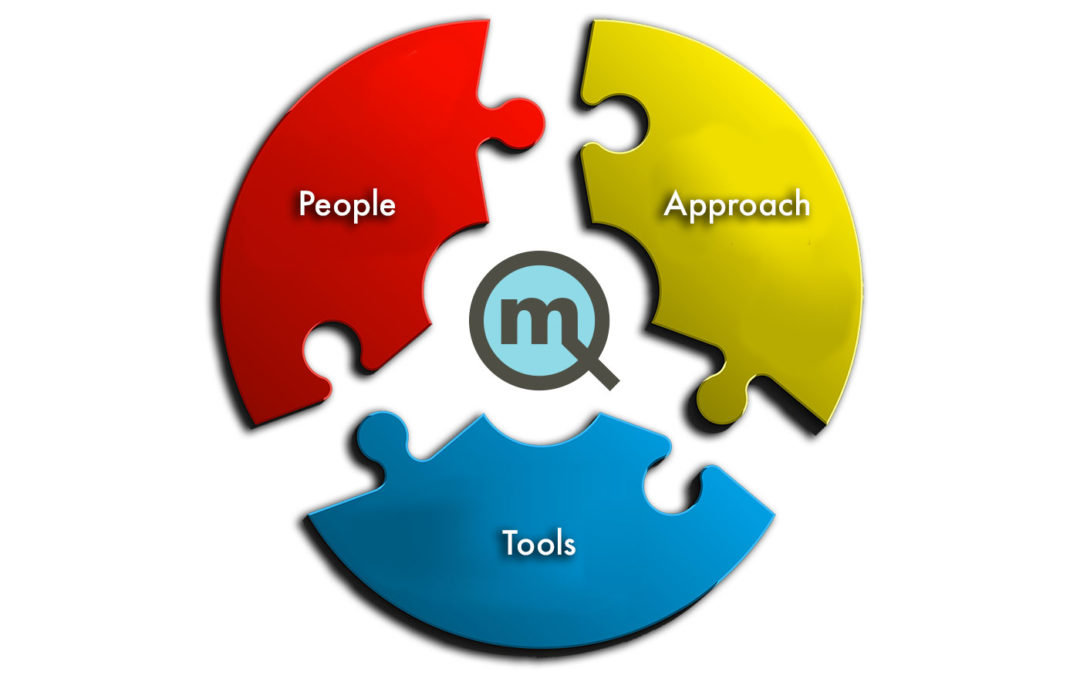

How we solve this dilemma.

- People: Small teams with cross-disciplinary IT skills and experience with analytics.

- Approach: Governed, flexible data models with self service for a variety of users.

- Tools: An analytics platform that supports flexibility to explore, discover and deploy.

The right people and skills create clarity and action. The first and most important step is to start with the right people. At Quantified Mechanix, our goal is to create the smallest team possible. This reduces the coordination and communication costs of a larger team, while inherently setting up the team to choose the right place to solve key issues. We see again and again teams that deliver data to the reporting team with no apparent basis in what the reporting team needs in order to deliver to the business. Avoid this scenario by not letting any part of the team throw its work over the wall to the next part of the process. An agile approach, with iterations that encompass all parts of the solution and involve the business, is the natural way to guard against this problem.

The most critical skill set is data and data integration.

- Identifying source data and mapping it into useful analytics.

- Designing analytics data models to support exploration, discovery and deployment.

Working with small teams means finding or developing people with skills in more than one key area. Perhaps the data integration leader has significant database experience, or depth with one or more of the key applications that run parts of the business. One area we think is critical is knowledge of source applications, how to access them, and how to map the information requirements back to the application database. This need is one of the reasons why the data and data integration layer of analytics represents 80% of the effort needed to succeed. Having access to these critical resources, especially if they have experience with data integration, reporting or analytics, can make a significant difference.
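As a rough illustration of what mapping information requirements back to the application database can look like, here is a minimal Python sketch of a source-to-target mapping. The table name `ORD_HDR`, its columns, the status codes and the `FieldMapping` helper are all hypothetical, invented for this example; a real mapping would come from the source application's documentation and the people who know it.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class FieldMapping:
    """One row of a source-to-target mapping document (hypothetical helper)."""
    source_table: str    # table in the application database (assumed name)
    source_column: str   # column in that table (assumed name)
    target_field: str    # business-friendly name in the analytics model
    transform: Callable[[Any], Any] = lambda value: value  # optional cleanup


# Hypothetical mapping for an order-entry application.
ORDER_MAPPINGS = [
    FieldMapping("ORD_HDR", "ORD_NO", "order_number"),
    FieldMapping("ORD_HDR", "CUST_ID", "customer_id"),
    # Source stores cents; the business wants currency units.
    FieldMapping("ORD_HDR", "ORD_AMT_CENTS", "order_amount",
                 lambda cents: cents / 100),
    # Source stores cryptic codes; the business wants readable labels.
    FieldMapping("ORD_HDR", "STAT_CD", "order_status",
                 lambda code: {"O": "Open", "C": "Closed"}.get(code, "Unknown")),
]


def apply_mappings(source_row: dict, mappings: list) -> dict:
    """Translate one source row into the analytics field names."""
    return {
        m.target_field: m.transform(source_row[m.source_column])
        for m in mappings
    }


source_row = {"ORD_NO": 1001, "CUST_ID": 42, "ORD_AMT_CENTS": 12550, "STAT_CD": "C"}
analytics_row = apply_mappings(source_row, ORDER_MAPPINGS)
print(analytics_row)
```

Most of the effort sits in the mapping table itself, not in the few lines of code that apply it. Knowing that `STAT_CD` means order status, or that amounts are stored in cents, is the kind of source application knowledge described above.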

Cloud based applications create risk and complexity.

- Access to data is more challenging.

- Access to documentation or expertise to understand the data may be an issue.

As more and more applications move to the cloud and are effectively managed by a third party, access to and understanding of application data is becoming a risk that organizations need to be prepared to manage. Though each application commonly comes with reporting capabilities, we hear frequently that they are not sufficient. Furthermore, analytics almost always requires data from more than one application in order to be meaningful. That problem can only be solved by integrating data from the applications, and business leaders should take care to have a plan to handle this. It seems logical to make sure that the applications they choose to adopt provide documentation and allow access to the data, but be careful and thorough. Analytics commonly requires access to the database, and application providers commonly provide access to the data not through a database connection, but via an API. An API is not a natural fit for data integration in the traditional way done with an ETL engine. Depending on the volume and method used to extract data, an API may work, but this approach is less common.
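To make the difference concrete, the sketch below shows the general shape of API-based extraction: instead of one bulk query against a database, the data arrives a page at a time and has to be accumulated into a staging area. It is a simplified illustration, not any vendor's actual API. The `fetch_page` function, its `offset`/`limit` parameters and the response layout are assumptions, and the "API" here is an in-memory stand-in so the example runs without a network.

```python
from typing import Iterator

# In-memory stand-in for a cloud application's data (hypothetical records).
_FAKE_RECORDS = [{"id": i, "amount": i * 10} for i in range(1, 8)]


def fetch_page(offset: int, limit: int) -> dict:
    """Hypothetical API call; a real one would be an authenticated HTTP request."""
    rows = _FAKE_RECORDS[offset:offset + limit]
    has_more = offset + limit < len(_FAKE_RECORDS)
    return {"rows": rows, "next_offset": offset + limit if has_more else None}


def extract_all(limit: int = 3) -> Iterator[dict]:
    """Walk the API page by page until it reports no further pages."""
    offset = 0
    while offset is not None:
        page = fetch_page(offset, limit)
        yield from page["rows"]
        offset = page["next_offset"]


# Land the extracted rows in a staging list before any transformation.
staging = list(extract_all())
print(len(staging), "rows staged")
```

Every page is a separate round trip, which is why volume matters so much when judging whether an API is workable for a given load. Real APIs typically add rate limits and authentication on top of this loop.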

Integration with the business and IT creates a better partnership and better analytics.

- Business and functional expertise in IT, not just the project manager.

- Data and technical understanding in the business.

- Common understanding of goals and objectives from both sides.

This illustrates the need for the business and IT to be integrated. It is becoming cliché that the business goes off and licenses an application in the cloud to run the business without understanding some of these issues, and then becomes frustrated when it’s difficult to get data out of those applications and integrate it into the analytics environment. But the need for closer integration goes far beyond this scenario. In the old days, the business would go to IT with report requests and IT would take weeks to deliver. By that time the need was no longer present, and countless cycles were spent this way.

Meeting the business where it’s at so it can do more.

- Enable the business, don’t just satisfy them: Self Service.

- Make the business capable, not dependent: Flexible Models, high performance.

With the right approach, modern analytics tools can provide a user friendly interface to the business, so that more of their requests can be met with self service. The right approach is usually something like a collection of atomic level dimensional data marts that fit together in logical ways. This approach also does a good job of delivering the governed, quality data that a self service environment demands, and provides many logical places to apply robust security. The discipline of delivering a self service environment also positions the team well for deploying more advanced reporting and analytics. All of the work on performance, quality, integration, and a user friendly data model pays even greater dividends when power users deliver advanced capabilities or deploy analytics to hundreds of users. A well designed and well managed data model supports self service and exploration by the business, removing the IT bottleneck and enabling meaningful exploration, discovery and analytics.
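One way to picture data marts that "fit together in logical ways" is two atomic-level fact tables that share a single customer dimension, so that results from either mart can be compared on the same terms. The sketch below uses Python's built-in `sqlite3` module purely for illustration; the table names, columns and sample rows are invented, and a production model would live in an analytics database rather than SQLite.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Shared dimension used by both marts.
CREATE TABLE dim_customer (
    customer_key  INTEGER PRIMARY KEY,
    customer_name TEXT,
    region        TEXT
);
-- Sales mart: one row per order (atomic level).
CREATE TABLE fact_sales (
    order_id     INTEGER,
    customer_key INTEGER REFERENCES dim_customer (customer_key),
    sale_amount  REAL
);
-- Support mart: one row per call (atomic level).
CREATE TABLE fact_support_calls (
    call_id      INTEGER,
    customer_key INTEGER REFERENCES dim_customer (customer_key),
    minutes      INTEGER
);

INSERT INTO dim_customer VALUES (1, 'Acme', 'East'), (2, 'Globex', 'West');
INSERT INTO fact_sales VALUES (100, 1, 250.0), (101, 1, 100.0), (102, 2, 400.0);
INSERT INTO fact_support_calls VALUES (900, 1, 30), (901, 2, 15), (902, 2, 45);
""")

# Because both marts share dim_customer, "region" means the same thing in each.
sales_by_region = conn.execute("""
    SELECT d.region, SUM(f.sale_amount)
    FROM fact_sales f JOIN dim_customer d USING (customer_key)
    GROUP BY d.region ORDER BY d.region
""").fetchall()

support_by_region = conn.execute("""
    SELECT d.region, SUM(f.minutes)
    FROM fact_support_calls f JOIN dim_customer d USING (customer_key)
    GROUP BY d.region ORDER BY d.region
""").fetchall()

print(sales_by_region)
print(support_by_region)
```

Keeping the facts at the atomic level means a self service user can roll them up by region today and by customer tomorrow without asking for a new extract. The shared dimension is also a natural place to apply security, for example by restricting which regions a user can see.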

Final piece of the puzzle: Capabilities and tools, designed to deliver analytics together.

- Netezza: A database appliance designed for analytics workloads.

- InfoSphere DataStage: A world class data integration platform.

- Cognos Analytics: An industry leading analytics platform.

Once the right people are in place and you have the right approach, the last piece is an integrated platform that provides the components you need to deliver analytics. Depending on your specific needs, that could include an analytics database, a data integration platform, and an analytics platform that includes a wide range of capabilities with a common data governance and security layer.

Netezza integrates database, server, storage and analytics into a single system with petabyte scalability. It provides a high-performance, massively parallel system that enables you to gain insight from your data and perform analytics on very large data volumes.

- Easy to deploy, manage and own on premise or in the cloud.

- Combines database, server and storage in one system designed for fast analytics.

- Extremely fast reports, dashboards and visualizations over massive data volumes.

- Easy to administer for analytics – it is designed for these workloads.

- Extremely fast ETL data loads, and unequaled performance with DataStage.

- Easy failover, redundancy and disaster recovery built into the hardware or the cloud.

Netezza is designed and built for analytics, delivering incredibly fast database performance for analytics workloads, both for loading and for reporting and analytics queries. Fast load performance makes for more robust and timely batch loads, easier support and recovery, and easier ETL development. On the analytics side, fast performance makes exploring and investigating data easier, as the data responds as fast as you can ask questions.

DataStage is a purpose built data integration platform that provides an intuitive ETL development environment. This creates efficiency for the development team and increased capabilities as a result of better data integration, data quality and timely access to data. Predefined stages for common tasks reduce code and coding time, and make jobs easier to develop and support.

- Massive throughput based on cutting edge parallel engineering.

- Feature rich environment of a mature and modern platform.

- Integration with related tools for extensive capabilities:

  - Cognos Analytics

  - Planning Analytics

  - SPSS Advanced Analytics

  - Master Data Management

  - Data Quality

- Significant ROI to business based on capability, and efficiency for the development team.

DataStage is a powerful data integration platform. It is uniquely architected to deliver massive throughput, and when combined with Netezza and Cognos Analytics it is even faster and more capable. Fast load performance makes for more robust and timely batch loads, easier support and recovery, and easier ETL development.

Cognos Analytics is an analytics platform that includes self service, data exploration, artificial intelligence, visualizations, and sophisticated reporting and analytics capabilities. These capabilities are built on top of a common metadata layer to provide the common data interface often called “the single version of the truth”. Cognos Analytics allows you to automate manual and error prone reporting done in spreadsheets, to quickly and seamlessly explore, discover and capture insights, and to deploy them to users securely across the enterprise. Cognos Analytics is designed to deliver scalable performance with ease of deployment and ease of use.

- Scalable enterprise analytics platform so you do not need to support many applications.

- Ease of use for simple users and powerful capabilities for advanced users.

- Data exploration and discovery to help you find insights.

- Ad hoc access for a wide variety of users so that users can self serve.

- Professionally authored reports so you can publish high quality content.

- Secure data and deployment so you can deliver content to a large number of users securely.

- Flexible deployment options, on premise or cloud, to fit into your chosen strategy.

- Assisted advanced analytics and machine learning to help you explore new capabilities, find new insights and uncover more value in your data.

Self Service saves time by enabling the business to meet many of its needs easily, removes the need to develop and communicate requirements, and allows the business to try things without involving IT. IT becomes a force multiplier, rather than a bottleneck, enabling the business to explore and develop insights, and then deploy them to the organization on a scalable governed platform integrated with security.

The business is enabled to explore, discover and innovate.

- Change is no longer risky, but measured and considered.

- Business has the agility to explore and the confidence to experiment.

This is the way to innovate. In this environment, the biggest risk is never taking one. With timely, trusted data, changing course is a much less risky proposition. And an automated, trusted process makes data exploration much easier. By the time an analyst has a question, they quite literally have the answer. The business needs an analytics platform that answers its questions quickly, in order to discover the insights that can make a difference.

Your analytics environment depends upon a powerful collection of tools that run key parts of your analytics platform. We offer the missing element: seamless integration and a deep stack of advanced technology, applied with an understanding of your business. With Quantified Mechanix on your team, you have the tools and experience to create analytics and applications that help you achieve industry leading business performance. We’ll help you cross the goal line.

Quantified Mechanix has technical capabilities and tools, all focused on improving results with analytics.

- Database: Database design, performance, security.

- Data Integration: ETL, master data management, data governance.

- Analytics: Visualizations, production reporting, self service, ad hoc.

- Environment: Expertise deploying on premise and in the cloud.

- Security: Designed and delivered robust, secure applications in the most demanding environments (HIPAA, DoD, Financial Services).